Do Mandatory Age Verification Laws Conflict with Biometric Privacy Laws?–Kuklinski v. Binance

California passed the California Age-Appropriate Design Code (AADC) nominally to protect children’s privacy, but at the same time, the AADC requires businesses to do an age “assurance” of all their users, children and adults alike. (Age “assurance” requires the business to distinguish children from adults, but the methods for implementing it share many characteristics with age verification–they just need to be less precise for anyone who isn’t around the age of majority. I’ll treat the two as equivalent.)

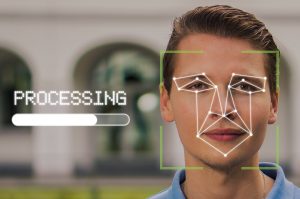

Doing age assurance/age verification raises substantial privacy risks. There are several ways of doing it, but the two primary options for quick results are (1) requiring consumers to submit government-issued documents, or (2) requiring consumers to submit to face scans that allow the algorithms to estimate the consumer’s age.

[Note: the differences between the two techniques may be legally inconsequential, because a service may want a confirmation that the person presenting the government documents is the person requesting access, which may essentially require a review of their face as well.]

But are face scans really an option for age verification, or will they conflict with other privacy laws? In particular, face scanning seemingly directly conflicts with biometric privacy laws, such as Illinois’ BIPA, which provide substantial restrictions on the collection, use, and retention of biometric information. (California’s Privacy Rights Act, CPRA, which the AADC supplements, also provides substantial protections for biometric information, which is classified as “sensitive” information.) If a business purports to comply with the CA AADC by using face scans for age assurance, will that business simultaneously violate BIPA and other biometric privacy laws?

Today’s case doesn’t answer the question, but boy, it’s a red flag.

The court summarizes BIPA Sec. 15(b):

Section 15(b) of the Act deals with informed consent and prohibits private entities from collecting, capturing, or otherwise obtaining a person’s biometric identifiers or information without the person’s informed written consent. In other words, the collection of biometric identifiers or information is barred unless the collector first informs the person “in writing of the specific purpose and length of term for which the data is being collected, stored, and used” and “receives a written release” from the person or his legally authorized representative.

Right away, you probably spotted three potential issues:

- The presentation of a “written release” slows down the process. I’ve explained how slowing down access to a website can constitute an unconstitutional barrier to content.

- Will an online clickthrough agreement satisfy the “written release” requirement? Per E-SIGN, the answer should be yes, but standard requirements for online contract formation are increasingly demanding more effort from consumers to signal their assent. In all likelihood, BIPA consent would require, at minimum, a two-click process to proceed. (Click 1 = consent to the BIPA disclosures. Click 2 = proceeding to the next step.)

- Can minors consent on their own behalf? Usually contracts with minors are voidable by the minor, but even then, other courts have required the contracting process to be clear enough for minors to understand. That’s no easy feat when it relates to complicated and sensitive disclosures, such as those seeking consent to engage in biometric data collection. This raises the possibility that at least some minors can never consent to face scans on their own behalf, in which case it will be impossible to comply with BIPA with respect to those minors (and services won’t know which consumers are unable to self-consent until after they do the age assessment #InfiniteLoop).

[Another possible tension is whether the business can retain face scans, even with BIPA consent, in order to show that each user was authenticated if challenged in the future, or if the face scans need to be deleted immediately, regardless of consent, to comply with privacy concerns in the age verification law.]

The primary defendant at issue, Binance, is a cryptocurrency exchange. (There are two Binance entities at issue here, BCM and BAM, but BCM drops out of the case for lack of jurisdiction). Users creating an account had to go through an identity verification process run by Jumio. The court describes the process:

Jumio’s software…required taking images of a user’s driver’s license or other photo identification, along with a “selfie” of the user to capture, analyze and compare biometric data of the user’s facial features….

During the account creation process, Kuklinski entered his personal information, including his name, birthdate and home address. He was also prompted to review and accept a “Self-Directed Custodial Account Agreement” for an entity known as Prime Trust, LLC that had no reference to collection of any biometric data. Kuklinski was then prompted to take a photograph of his driver’s license or other state identification card. After submitting his driver’s license photo, Kuklinski was prompted to take a photograph of his face with the language popping up “Capture your Face” and “Center your face in the frame and follow the on-screen instructions.” When his face was close enough and positioned correctly within the provided oval, the screen flashed “Scanning completed.” The next screen stated, “Analyzing biometric data,” “Uploading your documents”, and “This should only take a couple of seconds, depending on your network connectivity.”

Allegedly, none of the Binance or Jumio legal documents make the BIPA-required disclosures.

The court rejects Binance’s (BAM) motion to dismiss:

- Financial institution. BIPA doesn’t apply to a GLBA-regulated financial institution, but Binance isn’t one of those.

- Choice of Law. BAM is based in California, so it argued CA law should apply. The court says no because CA law would foreclose the BIPA claim, plus some acts may have occurred in Illinois. Note: as a CA company, BAM will almost certainly need to comply with the CA AADC.

- Extraterritorial Application. “Kuklinski is an Illinois resident, and…BIPA was enacted to protect the rights of Illinois residents. Moreover, Kuklinski alleges that he downloaded the BAM application and created the BAM account while he was in Illinois.”

- Inadequate Pleading. BAM claimed the complaint lumped together BAM, BCM, and Jumio. The court says BIPA doesn’t have any heightened pleading standards.

- Unjust Enrichment. The court says this is linked to the BIPA claim.

Jumio’s motion to dismiss also goes nowhere:

- Retention Policy. Jumio says it now has a retention policy, but the court says that it may have been adopted too late and may not be sufficient.

- Prior Settlement. Jumio already settled a BIPA case, but the court says that settlement could protect Jumio only before June 23, 2019.

- First Amendment. The court says the First Amendment argument against BIPA was rejected in Sosa v. Onfido and that decision was persuasive.

[The Sosa v. Onfido case also involved face-scanning identity verification for the service OfferUp. I wonder if the court would conduct the constitutional analysis differently if the defendant argued it had to engage with biometric information in order to comply with a different law, like the AADC?]

The court properly notes that this was only a motion to dismiss; defendants could still win later. Yet, this ruling highlights a few key issues:

1. If California requires age assurance and Illinois bans the primary methods of age assurance, there may be an interstate conflict of laws that ought to support a Dormant Commerce Clause challenge. Plus, other states beyond Illinois have adopted their own unique biometric privacy laws, so interstate businesses are going to run into a state patchwork problem where it may be difficult or impossible to comply with all of the different laws.

2. More states are imposing age assurance/age verification requirements, including Utah and likely Arkansas. Often, like the CA AADC, those laws don’t specify how the assurance/verification should be done, leaving it to businesses to figure it out. But the legislatures’ silence on the process truly reflects their ignorance–the legislatures have no idea what technology will work to satisfy their requirements. It seems obvious that legislatures shouldn’t adopt requirements when they don’t know if and how they can be satisfied–or if satisfying the law will cause a different legal violation. Adopting a requirement that may be unfulfillable is legislative malpractice and ought to be evidence that the legislature lacked a rational basis for the law because they didn’t do even minimal diligence.

3. The clear tension between the CA AADC and biometric privacy is another indicator that the CA legislature lied to the public when it claimed the law would enhance children’s privacy.

4. I remain shocked by how many privacy policy experts and lawyers remain publicly quiet about age verification laws, or even tacitly support them, despite the OBVIOUS and SIGNIFICANT privacy problems they create. If you care about privacy, you should be extremely worried about the tsunami of age verification requirements being embraced around the country/globe. The invasiveness of those requirements could overwhelm and functionally moot most other efforts to protect consumer privacy.

5. Mandatory online age verification laws were universally struck down as unconstitutional in the 1990s and early 2000s. Legislatures are adopting them anyway, essentially ignoring the significant adverse caselaw. We are about to have a high-stakes society-wide reconciliation about this tension. Are online age verification requirements still unconstitutional 25 years later, or has something changed in the interim that makes them newly constitutional? The answer to that question will have an enormous impact on the future of the Internet. If the age verification requirements are now constitutional despite the legacy caselaw, legislatures will ensure that we are exposed to major privacy invasions everywhere we go on the Internet–and the countermoves of consumers and businesses will radically reshape the Internet, almost certainly for the worse.

Case citation: Kuklinski v. Binance Capital Management Co., 2023 WL 2788654 (S.D. Ill. April 4, 2023)

Prior AADC coverage:

- Why I Think California’s Age-Appropriate Design Code (AADC) Is Unconstitutional

- Five Ways That the California Age-Appropriate Design Code (AADC/AB 2273) Is Radical Policy

- Some Memes About California’s Age-Appropriate Design Code (AB 2273)

- An Interview Regarding AB 2273/the California Age-Appropriate Design Code (AADC)

- Op-Ed: The Plan to Blow Up the Internet, Ostensibly to Protect Kids Online (Regarding AB 2273)

- A Short Explainer of How California’s Age-Appropriate Design Code Bill (AB2273) Would Break the Internet

- Will California Eliminate Anonymous Web Browsing? (Comments on CA AB 2273, The Age-Appropriate Design Code Act)
